Prerequisites¶

. Install:

pip install xdggsWhat this notebook does¶

Loads the Healpix_ Zarr riomar data

visualise with xdggs

Steps¶

Setup & load inputs

Load the Zarr file

**visualise **

Output dataset conventions¶

meta data shoudl be updated to aligne fair practice?

Step 1 — Setup & load zarr file¶

##on HPC

import os

import psutil

from dask.distributed import Client, LocalCluster

from pathlib import Path

import xarray as xr

import numpy as np

import xdggs

import healpix_geo

# pick a fast local path on the compute node

local_dir = (

"/tmp"

)

local_dir = str(Path(local_dir) / "dask-scratch")

print("Using Dask local_directory:", local_dir)

print("=== Starting local Dask cluster (auto-sized) ===")

cpu = os.cpu_count() or 1

total_gb = psutil.virtual_memory().total / (1024**3)

# Good “use most, but not all” defaults:

n_workers = cpu # ~1 worker per CPU core

threads_per_worker = 1 # best for numpy-heavy compute

memory_limit_gb = (total_gb * 0.85) / n_workers # leave ~15% headroom

memory_limit = f"{memory_limit_gb:.2f}GB"

n_workers=32

cluster = LocalCluster(

n_workers=n_workers,

# threads_per_worker=threads_per_worker,

processes=True,

memory_limit=memory_limit,

local_directory=local_dir, # <--- THIS FIXES THE WARNING

dashboard_address=":8787",

)

client = Client(cluster)

print("Dask dashboard:", client.dashboard_link)

print("\n=== Dask cluster resources ===")

info = client.scheduler_info()

workers = info["workers"]

total_threads = sum(w["nthreads"] for w in workers.values())

total_mem_gb = sum(w["memory_limit"] for w in workers.values()) / (1024**3)

print(f"Workers: {len(workers)}")

print(f"Total threads: {total_threads}")

print(f"Total memory limit: {total_mem_gb:.2f} GB")

# Optional: per-worker details

#for addr, w in workers.items():

# print(f"- {addr}: nthreads={w['nthreads']}, mem_limit={w['memory_limit']/1e9:.2f} GB »)import xarray as xr

import xdggs

from dask.distributed import Client, LocalCluster

cluster = LocalCluster()

client = Client(cluster)

clientLoading...

zarr_hp_file_path = "https://data-fair2adapt.ifremer.fr/riomar-zarr_tina/small_hp.zarr"

# zarr_hp_file_path = "/scale/riomar-zarr_tina/small_hp.zarr"

# zarr_hp_file_path = "https://data-fair2adapt.ifremer.fr/riomar-zarr_tina/test3.zarr"

# zarr_hp_file_path = "./riomar-zarr_tina/test3.zarr"

zarr_hp_file_path = "./riomar-zarr_tina/Y2023.zarr"

ds = xr.open_zarr(zarr_hp_file_path)

# ds = ds.persist().sortby("time_counter")

ds/var/folders/zf/53jxd5nj2j3dmfjqpx41p24c0000gn/T/ipykernel_43868/25675482.py:7: RuntimeWarning: Failed to open Zarr store with consolidated metadata, but successfully read with non-consolidated metadata. This is typically much slower for opening a dataset. To silence this warning, consider:

1. Consolidating metadata in this existing store with zarr.consolidate_metadata().

2. Explicitly setting consolidated=False, to avoid trying to read consolidate metadata, or

3. Explicitly setting consolidated=True, to raise an error in this case instead of falling back to try reading non-consolidated metadata.

ds = xr.open_zarr(zarr_hp_file_path)

Loading...

# Make time monotonic (required for resample)

ds = ds.sortby("time_counter")

# Daily mean

ds_mean_daily = ds.resample(time_counter="1D").mean(keep_attrs=True)

ds_mean_dailyLoading...

(

ds_mean_daily.compute()

.pipe(xdggs.decode)

.dggs.assign_latlon_coords()

.dggs.explore(alpha=0.3)

)/Users/annef/mamba/envs/riomar/lib/python3.12/site-packages/jupyter_client/session.py:727: UserWarning: Message serialization failed with:

Out of range float values are not JSON compliant: nan

Supporting this message is deprecated in jupyter-client 7, please make sure your message is JSON-compliant

content = self.pack(content)

Loading...

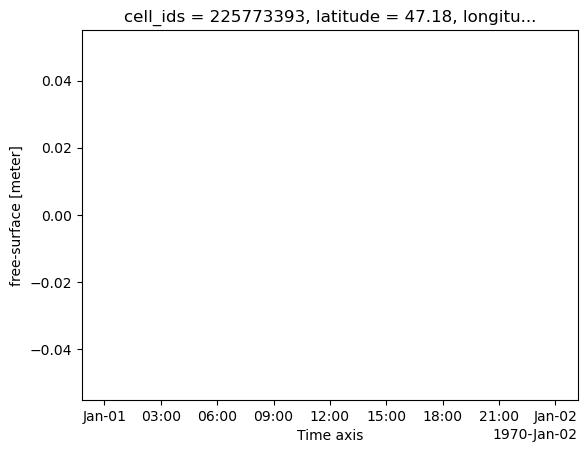

2.2 plot time series of one point¶

chose one point and make time series plot

import healpix_geo as hpg

# 1) Midpoint of your bbox

lon_min, lon_max = -2.8, -1.97333

lat_min, lat_max = 47.04367, 47.31558

lon_mid = (lon_min + lon_max) / 2.0

lat_mid = (lat_min + lat_max) / 2.0

print("midpoint:", lon_mid, lat_mid)

# 2) Pick up the level from the dataset's cell_ids attribute

level = ds.cell_ids.attrs["level"]

# Common attribute keys people use — adjust if your file uses

# another name

print("HEALPix level:", level)

# 3) Compute the Helix cell_id for that lon/lat using healpix_geo

# (function names vary between packages/versions)

cell_id = hpg.nested.lonlat_to_healpix(lon_mid, lat_mid, depth=level, ellipsoid="WGS84")

print("HEALPix cell_id:", cell_id)

# 4) Select that cell from ds

# If cell_ids is a coordinate along dimension "cell_ids",

# this should work:

ds_point = (

ds.sel(cell_ids=cell_id).pipe(xdggs.decode).dggs.assign_latlon_coords().compute()

)midpoint: -2.386665 47.179625

HEALPix level: 13

HEALPix cell_id: [225773393]

import matplotlib.pyplot as plt

ds_point.zeta.plot()

plt.savefig("zeta.png", dpi=300, bbox_inches="tight")

plt.close()