from IPython.core.magic import register_cell_magic


@register_cell_magic
def skip(line, cell):
    # %%skip: do nothing, so the cell body is never executed
    return

import os

os.environ["SHELL"] = "/bin/bash"

# open_virtual_dataset version, written with
# ds.virtualize.to_icechunk(session.store)
# This creates the archive in the storage specified
# above

ICECHUNK_CATALOG = "/scale/project/lops-oh-fair2adapt/fpaul/tmp/riomar.zarr"

# or, http urls inside (warning: timeout when accessed from Ifremer...):

Note: the usual required pattern is always
Repository.open(...) -> session -> session.store -> xr.open_zarr(store).

Local Icechunk catalog, opened with open_dataset for everyday use

import icechunk

config = icechunk.RepositoryConfig.default()
config.set_virtual_chunk_container(
    icechunk.VirtualChunkContainer(
        "file:///scale/project/lops-oh-fair2adapt/riomar/GAMAR/",
        icechunk.local_filesystem_store("/scale/project/lops-oh-fair2adapt/riomar"),
    )
)

outpath = "/scale/project/lops-oh-fair2adapt/fpaul/tmp/"
storage = icechunk.local_filesystem_storage(os.path.join(outpath, "riomar.zarr"))
storage

2025-12-11T13:55:40.946395Z WARN icechunk::storage::object_store: The LocalFileSystem storage is not safe for concurrent commits. If more than one thread/process will attempt to commit at the same time, prefer using object stores.
at icechunk/src/storage/object_store.rs:80

ObjectStorage(backend=LocalFileSystemObjectStoreBackend(path=/scale/project/lops-oh-fair2adapt/fpaul/tmp/riomar.zarr))

credentials = icechunk.containers_credentials(
    {
        "file:///scale/project/lops-oh-fair2adapt/riomar/GAMAR/": None,
    }
)

repo = icechunk.Repository.open(
    storage,
    config,
    authorize_virtual_chunk_access=credentials,
)
# Repository.open expects the repository to exist already.
# Creating it makes the directory in storage, which must
# not exist beforehand. Here:
# /scale/project/lops-oh-fair2adapt/fpaul/tmp/
# riomar.zarr

rs = repo.readonly_session(branch="main")
rs

<icechunk.session.Session at 0x7f265dfa5a50>

import xarray as xr

## Opening is instantaneous
vds_combined = xr.open_dataset(
    rs.store, engine="zarr", chunks={}, decode_times=True, consolidated=False
)
print("✅ Combined shape:", vds_combined.temp.shape)
# .chunk is left uncalled here, so display() shows the bound-method repr,
# which embeds the dataset summary
display(vds_combined.chunk)

✅ Combined shape: (201600, 40, 838, 727)

<bound method Dataset.chunk of <xarray.Dataset> Size: 79TB

Dimensions: (time_counter: 201600, s_w: 41, s_rho: 40, y_rho: 838,

x_rho: 727, y_u: 838, x_u: 726, y_v: 837, x_v: 727,

axis_nbounds: 2)

Coordinates: (12/19)

* time_counter (time_counter) datetime64[ns] 2MB 2020-02-01T00:52:3...

* s_w (s_w) float32 164B -1.0 -0.975 -0.95 ... -0.025 0.0

* s_rho (s_rho) float32 160B -0.9875 -0.9625 ... -0.0125

* y_rho (y_rho) float32 3kB 0.0 0.0 0.0 0.0 ... 0.0 0.0 0.0 0.0

* x_rho (x_rho) float32 3kB 0.0 0.0 0.0 0.0 ... 0.0 0.0 0.0 0.0

* y_u (y_u) float32 3kB 0.0 0.0 0.0 0.0 ... 0.0 0.0 0.0 0.0

... ...

nav_lon_rho (y_rho, x_rho) float32 2MB dask.array<chunksize=(838, 727), meta=np.ndarray>

nav_lon_u (y_u, x_u) float32 2MB dask.array<chunksize=(838, 726), meta=np.ndarray>

nav_lon_v (y_v, x_v) float32 2MB dask.array<chunksize=(837, 727), meta=np.ndarray>

time_instant (time_counter) datetime64[ns] 2MB dask.array<chunksize=(1,), meta=np.ndarray>

time_instant_bounds (time_counter, axis_nbounds) datetime64[ns] 3MB dask.array<chunksize=(1, 2), meta=np.ndarray>

time_counter_bounds (time_counter, axis_nbounds) datetime64[ns] 3MB dask.array<chunksize=(1, 2), meta=np.ndarray>

Data variables: (12/14)

Tcline (time_counter) float32 806kB dask.array<chunksize=(1,), meta=np.ndarray>

Cs_w (time_counter, s_w) float32 33MB dask.array<chunksize=(1, 41), meta=np.ndarray>

Vtransform (time_counter) float32 806kB dask.array<chunksize=(1,), meta=np.ndarray>

Cs_r (time_counter, s_rho) float32 32MB dask.array<chunksize=(1, 40), meta=np.ndarray>

hc (time_counter) float32 806kB dask.array<chunksize=(1,), meta=np.ndarray>

salt (time_counter, s_rho, y_rho, x_rho) float32 20TB dask.array<chunksize=(1, 40, 838, 727), meta=np.ndarray>

... ...

theta_s (time_counter) float32 806kB dask.array<chunksize=(1,), meta=np.ndarray>

sc_r (time_counter, s_rho) float32 32MB dask.array<chunksize=(1, 40), meta=np.ndarray>

theta_b (time_counter) float32 806kB dask.array<chunksize=(1,), meta=np.ndarray>

u (time_counter, s_rho, y_u, x_u) float32 20TB dask.array<chunksize=(1, 40, 838, 726), meta=np.ndarray>

v (time_counter, s_rho, y_v, x_v) float32 20TB dask.array<chunksize=(1, 40, 837, 727), meta=np.ndarray>

zeta (time_counter, y_rho, x_rho) float32 491GB dask.array<chunksize=(1, 838, 727), meta=np.ndarray>

Attributes: (12/39)

name: GAMAR_GLORYS_1h_inst

description: Created by xios

Conventions: CF-1.6

title: GAMAR_GLORYS

rst_file: croco_rst.nc

grd_file: croco_grd.nc

... ...

gamma2_expl: Slipperiness parameter

x_sponge: 0.0

v_sponge: 0.0

sponge_expl: Sponge parameters : extent (m) & viscosity (m2.s-1)

SRCS: main.F step.F read_inp.F timers_roms.F init_scalars.F ini...

CPP-options: REGIONAL GAMAR MPI TIDES OBC_WEST OBC_NORTH XIOS USE_CALE...>

temp = vds_combined.temp.isel(time_counter=700, s_rho=0)
print("Mean:", temp.mean().compute().item())  # Force compute
temp

Mean: 7.816941738128662

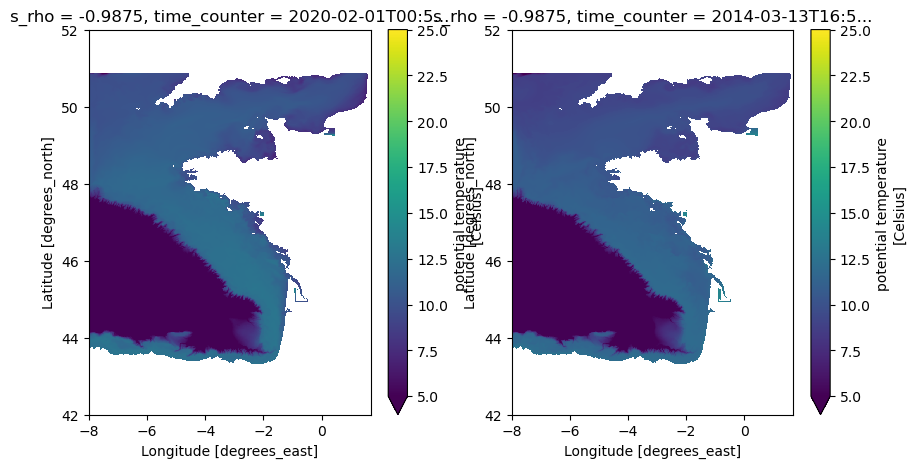
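The sizes reported in the dataset summary above can be cross-checked with a little arithmetic, which also shows how much data a single-level selection has to touch. This is only a rough sketch: it assumes `temp` is chunked like `salt` (the summary elides `temp`), it ignores compression, and the helper `nbytes` is introduced here purely for illustration.

```python
from math import prod

BYTES_PER_FLOAT32 = 4


def nbytes(shape, itemsize=BYTES_PER_FLOAT32):
    """Uncompressed size in bytes of an array with the given shape."""
    return prod(shape) * itemsize


temp_shape = (201600, 40, 838, 727)  # printed above as "Combined shape"
chunk_shape = (1, 40, 838, 727)      # chunksize shown for salt; ASSUMED identical for temp
slice_shape = (838, 727)             # temp.isel(time_counter=700, s_rho=0)

total = nbytes(temp_shape)
chunk = nbytes(chunk_shape)
wanted = nbytes(slice_shape)

print(f"temp, uncompressed: {total / 1e12:.2f} TB")
print(f"one chunk:          {chunk / 1e6:.1f} MB")
print(f"one 2D slice:       {wanted / 1e6:.2f} MB")
print(f"chunks in temp:     {total // chunk}")
```

Under these assumptions each chunk spans all 40 `s_rho` levels, so selecting one level at one time step reads roughly 40 times more data than the slice itself contains.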

from matplotlib import pyplot as plt

plt.subplots(1, 2, figsize=(10, 5))
plot_kwargs = {
    "x": "nav_lon_rho",
    "y": "nav_lat_rho",
    #'cmap': 'RdBu_r',
    "ylim": (42, 52),
    "vmin": 5,
    "vmax": 25,
}
plt.subplot(1, 2, 1)
vds_combined.isel(time_counter=0).temp.isel(s_rho=0).plot(**plot_kwargs)
plt.subplot(1, 2, 2)
vds_combined.isel(time_counter=1000).temp.isel(s_rho=0).plot(**plot_kwargs)

Local Icechunk catalog, opened with open_zarr

(for manifest analysis, path rewriting, miscellaneous tasks...)

FP: meh, in the end I am not doing this; it brings no benefit in my case.
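For reference, the path rewriting mentioned above amounts to swapping the URL prefix of each virtual chunk location so that it falls under a registered virtual chunk container prefix. The sketch below is plain string handling, not VirtualiZarr or Icechunk API; the destination prefix, the file names and both helper functions are hypothetical and only serve to illustrate the idea.

```python
OLD_PREFIX = "file:///scale/project/lops-oh-fair2adapt/riomar/GAMAR/"
# Hypothetical destination, for illustration only; not a real bucket.
NEW_PREFIX = "s3://example-bucket/riomar/GAMAR/"


def rewrite_location(location, old=OLD_PREFIX, new=NEW_PREFIX):
    """Swap the leading prefix of one chunk location, leaving the rest untouched."""
    if not location.startswith(old):
        raise ValueError(f"{location!r} does not start with {old!r}")
    return new + location[len(old):]


def is_covered(location, container_prefixes):
    """True if the location falls under at least one container prefix."""
    return any(location.startswith(prefix) for prefix in container_prefixes)


# Hypothetical file names; the real ones under GAMAR/ are not listed in this notebook.
locations = [OLD_PREFIX + "file_0001.nc", OLD_PREFIX + "file_0002.nc"]
rewritten = [rewrite_location(loc) for loc in locations]

for loc in rewritten:
    print(loc, is_covered(loc, [NEW_PREFIX]))
```

Raising on a non-matching prefix, rather than passing the location through unchanged, makes it obvious when a reference would end up outside every registered container.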

Practical differences

| Criterion | open_dataset(..., engine="zarr") | open_zarr(...) |
|---|---|---|
| Flexibility | ✅ Generic entry point, works with any engine | Zarr only; accepts the same decoding options (decode_cf, mask_and_scale...) |
| CF decoding | ✅ Times, units and scaling decoded by default | ✅ Also decoded by default (decode_cf=True) |
| Chunks | ✅ chunks={} gives dask arrays that follow the stored chunking | ✅ chunks="auto" is the default |
| Store object | ✅ rs.store directly | ✅ rs.store directly |

Icechunk catalog over HTTP

TODO: still to be done!

Caution, this is probably still relevant:

Note (Fred): this works from outside Ifremer, but not from the Ifremer HPC, for example (timeout!)...

With Colab (add the URL once it works):

# NOTE FP: as of today, it does not seem possible
# to use the VirtualiZarr Icechunk catalog over
# http, unlike kerchunk ...
# Locally it works, but not remotely over http,
# unless you use S3 or something similar